- Blog

- Best bathroom design software free

- Hack get random de dragon city

- Download free complete porn movies hd

- Download the new version for windows Cake Blast - Match 3 Puzzle Game

- Download the new Roman Empire Free

- Instal Aiseesoft Slideshow Creator 1-0-60 free

- Minecraft small city map 1-12-2

- Spotify mod with offline download

- Download cadence of hyrule dlc for free

- Download mk 11 ultimate pc

- What does uwu mean

- Download free gtfo price

- Deep rock galactic xbox download free

- Donut county full game download free

- Free download infamous 2009

- Mocha pro not showing in after effects

- Officesuite apk

- Debian install mysql server

- Prime music unlimited

- Flick r

- Mafia ii definitive edition download free

- Download antichamber ps4

- Download free f1 2011

- Affinity photo workbook amazon

- Shapr3d macbook pro

- Wondershare pdfelement 8 download

- Sonic 3 and knuckles steam free download

- Windows 10 virtual audio device

- Burp suite professional license key file crack

- Download quicktime windows 10 64 bit

- How to stop all running programs on windows 10

- Python 3 download

- Topaz video enhance ai free

- Pc booster app for windows 10

- Kaspersky security cloud free 2021

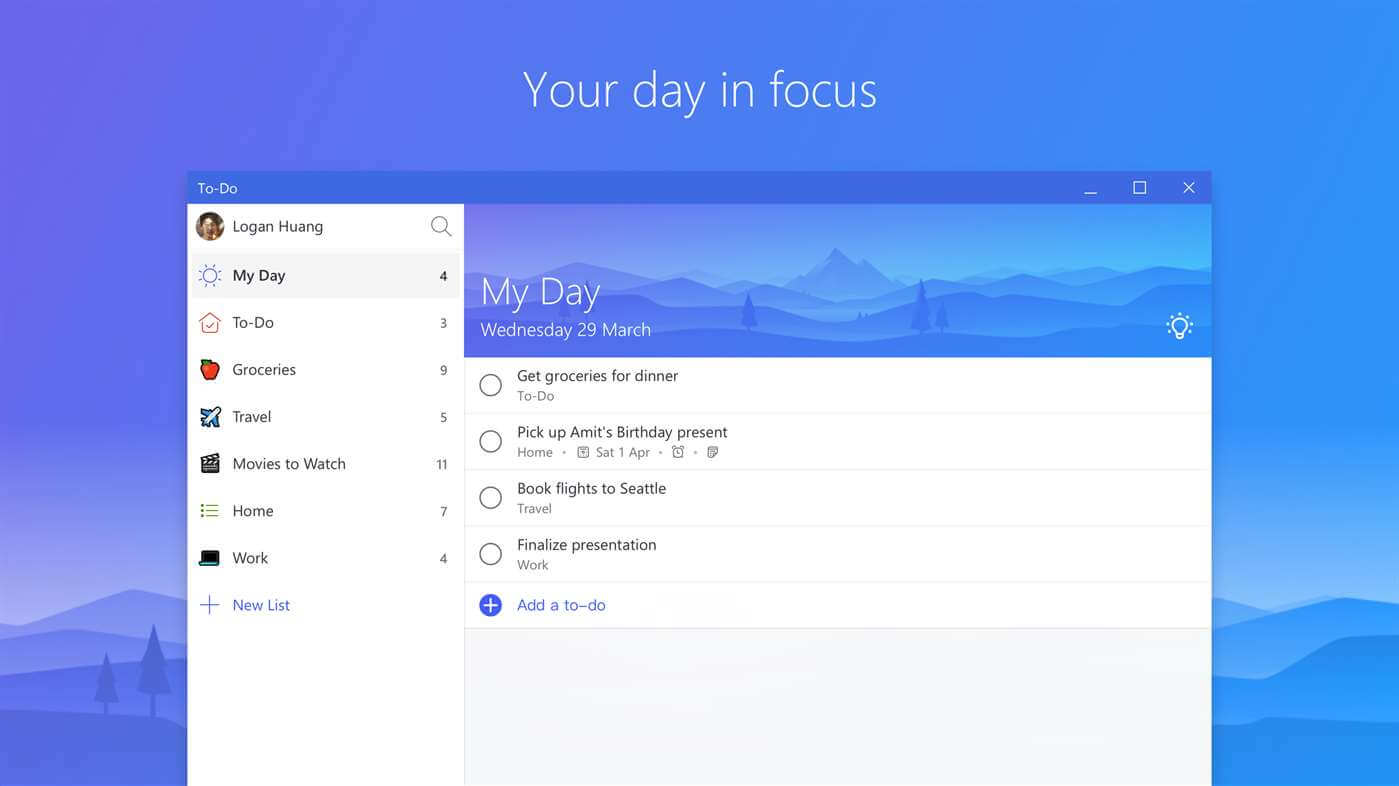

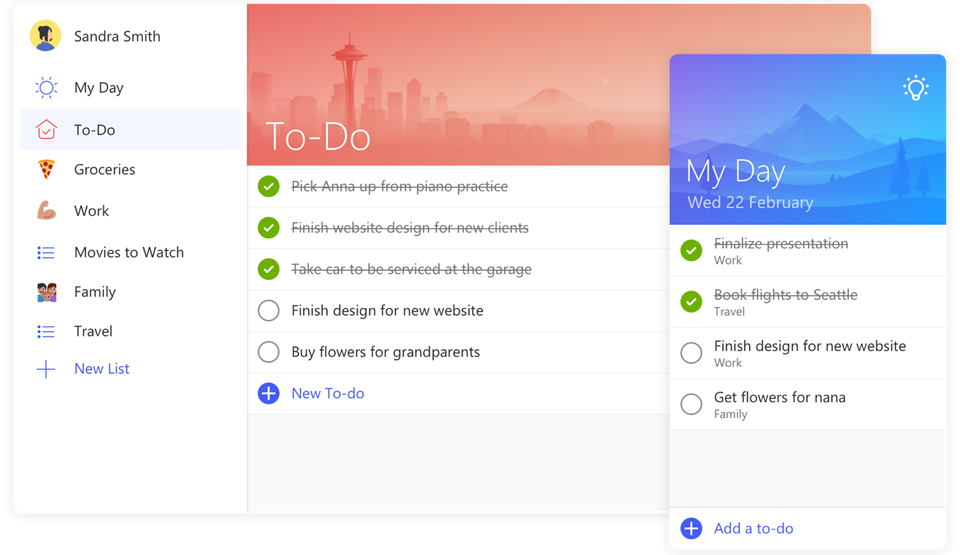

- Microsoft to dos

- Kine master mod apk download

When people search for information online today, they are presented with an array of options, and they can judge for themselves which results are reliable. A chat AI like ChatGPT removes that “human assessment” layer and forces people to take results at face value, says Chirag Shah, a computer science professor at the University of Washington who specializes in search engines. People might not even notice when these AI systems generate biased content or misinformation, and then end up spreading it further, he adds.

When asked, OpenAI was cryptic about how it trains its models to be more accurate. A spokesperson said that ChatGPT was a research demo, and that it’s updated on the basis of real-world feedback. But it’s not clear how that will work in practice, and accurate results will be crucial if Microsoft wants people to stop “googling” things.

In the meantime, it’s more likely that we are going to see apps such as Outlook and Office get an AI injection, says Shah. ChatGPT’s potential to help people write more fluently and more quickly could be Microsoft’s killer application. Language models could be integrated into Word to make it easier for people to summarize reports, write proposals, or generate ideas, Shah says. They could also give email programs and Word better autocomplete tools, he adds. Microsoft has already said it will use OpenAI’s text-to-image generator DALL-E to create images for PowerPoint presentations too.

Roomba testers feel misled after intimate images ended up on Facebook

Late last year, we published a bombshell story about how sensitive images of people collected by Roomba vacuum cleaners ended up leaking online. These people had volunteered to test the products, but it had never remotely occurred to them that their data could end up leaking in this way.